feat(alexa-skill): implement aws-lambda and alexa-skill for n8n webhook invocation

1

.gitignore

vendored

Normal file

@@ -0,0 +1 @@

.venv/

353

CHANGELOG.md

@@ -4,6 +4,359 @@ All significant changes to the ALPHA_PROJECT project are documented here.

---

## [2026-03-21] AWS Lambda Bridge for Alexa Skill "Pompeo"

Completed the planning and implementation of the AWS Lambda function that bridges the Alexa skill "Pompeo" and the n8n backend. This concludes the local development part of "Phase 4: Voice Interface (Pompeo)".

### Planning and Design

- **Created `aws-lambda/README.md`**: a detailed deploy plan that includes:
  - Analysis of functional and non-functional requirements (zero cost, private distribution).
  - Architecture design (Lambda as a "thin bridge").
  - Specification of the IAM role (`AWSLambdaBasicExecutionRole`).
  - Design of the function logic in Python.
  - A step-by-step guide for creating the resources on AWS (IAM, Lambda) and in the Alexa Developer Console.
- **Research and Technical Choices**:
  - Confirmed that the AWS Lambda free tier is sufficient to run at zero cost.
  - Established that private distribution is achieved by keeping the skill in the "In Development" state, without publishing it.
  - Defined the use of a two-word "Invocation Name" (e.g. "maggiordomo Pompeo") to comply with Alexa's policies.

### Implementation

- **Project Structure**: created the `aws-lambda/src` subfolder for the source code.
- **Source Code**: implemented the `lambda_handler` function in `aws-lambda/src/index.py`, which forwards Alexa requests to the n8n webhook securely (HTTPS plus a secret token header).
- **Dependencies**: declared the dependencies (`requests`) in `aws-lambda/src/requirements.txt`.
- **Deploy Package**: created the Python virtual environment and installed the dependencies directly into the `src` folder to prepare the deploy package.
- **Final Artifact**: generated `aws-lambda/pompeo-alexa-bridge.zip`, ready to be uploaded to the AWS Lambda console.

---

## [2026-03-21] Jellyfin Playback Agent: Block A completed

### New n8n workflow

- **`🎬 Pompeo — Jellyfin Playback [Webhook]`** (`AyrKWvboPldzZPsM`): receives webhooks from Jellyfin (PlaybackStart / PlaybackStop), filters for the user `martin` (userId whitelist), and writes to Postgres:
  - **PlaybackStart** → INSERT into `behavioral_context` (`event_type=watching_media`, `do_not_disturb=true`, notes with item/device/session_id) + INSERT into `agent_messages` (subject `▶️ <title> (<device>)`)
  - **PlaybackStop** → UPDATE on the most recent open row (`end_at=now()`, `do_not_disturb=false`) + INSERT into `agent_messages` (subject `⏹️ ...`)

### Bugs fixed (n8n infrastructure)

- **n8n v2 webhook path**: to register a webhook with a static path via the API, the `webhookId` field must be set as a top-level attribute of the node (not inside `parameters`). Without it, n8n generates the dynamic path `{workflowId}/{nodeName}/{path}`, which the webhook pod does not load correctly in queue mode.
- **Postgres SSL / Patroni**: Postgres credentials created via the API used SSL with `rejectUnauthorized=true` by default, which is incompatible with Patroni's self-signed certificate. Fix: added `NODE_TLS_REJECT_UNAUTHORIZED=0` to the `n8n-app` and `n8n-app-worker` deployments.
- **Postgres node queryParams**: `additionalFields.queryParams` with `$json.*` expressions does not work correctly in n8n v2.5.2. Fix: values are inlined in the SQL via n8n expressions `{{ $json.field }}`.

### Jellyfin configuration

- Jellyfin webhook plugin configured to POST to `http://n8n-app-webhook.automation.svc.cluster.local/webhook/jellyfin-playback` (events: PlaybackStart + PlaybackStop)

---

## [2026-03-21] Daily Digest: Postgres memory integration

### Changes to the workflow `📬 Gmail — Daily Digest [Schedule]` (`1lIKvVJQIcva30YM`)

Added a parallel branch that saves facts to memory after the GPT classification:

```
Parse risposta GPT-4.1 ──┬──> Telegram - Invia Report (unchanged)
                         ├──> Dividi Email (unchanged)
                         └──> 🧠 Estrai Fatti ──> 🔀 Ha Fatti? ──> 💾 Upsert Memoria
```

**`🧠 Estrai Fatti` (Code):**

- Filters emails with `action != 'trash'` and a non-empty summary
- Calls GPT-4.1 in batch to extract, for each email: `fact`, `category`, `ttl_days`, `pompeo_note`, `entity_refs`
- Computes `expires_at` from `ttl_days` (14 days for bookings, 45 days for bills, 90 days for work/condominium, 30 days by default)
- Returns one item for each fact to persist
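The `expires_at` rule described for `🧠 Estrai Fatti` can be sketched in Python. This is only an illustration: the real logic lives in an n8n Code node, and the category keys (`booking`, `bill`, `work`, `condominium`) and the function name are assumptions made for this sketch. Only the day counts come from the changelog.

```python
from datetime import datetime, timedelta, timezone

# Default TTLs in days, as listed in the changelog entry. The category keys
# are hypothetical labels chosen for this sketch.
DEFAULT_TTL_DAYS = {
    "booking": 14,
    "bill": 45,
    "work": 90,
    "condominium": 90,
}
FALLBACK_TTL_DAYS = 30


def compute_expires_at(category, ttl_days=None, now=None):
    """Return the expiry timestamp for a fact.

    An explicit positive ttl_days (for example the value returned by the LLM)
    wins; otherwise the per-category default applies, then the 30-day fallback.
    """
    now = now or datetime.now(timezone.utc)
    if not isinstance(ttl_days, int) or ttl_days <= 0:
        ttl_days = DEFAULT_TTL_DAYS.get(category, FALLBACK_TTL_DAYS)
    return now + timedelta(days=ttl_days)
```

Passing `now` explicitly keeps the function deterministic, which makes it easy to check the defaults against a fixed date.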

**`💾 Upsert Memoria` (Postgres node → `mRqzxhSboGscolqI`):**

- `INSERT INTO memory_facts` with `source='email'`, `source_ref=threadId`
- `ON CONFLICT ON CONSTRAINT memory_facts_dedup_idx DO UPDATE` → updates the row if the same thread is reprocessed
- Saved fields: `category`, `subject`, `detail` (JSONB), `action_required`, `action_text`, `pompeo_note`, `entity_refs`, `expires_at`

### Related fix

- Added `newer_than:1d` to the Gmail query on both fetch nodes: this avoids re-fetching months-old emails that were never marked `Processed`

---

## [2026-03-21] DB Schema v2: contacts, memory_facts_archive, entity_refs

### New tables

- **`contacts`**: multi-tenant graph of people. Each row models a `user_id → subject` relationship with `relation`, `city`, `country`, `profession`, `aliases[]`, `born_year`, `details` (free-form narrative for the LLM) and `metadata` JSONB. It can be traversed recursively to infer second-degree relationships (e.g. Martin → uncle Mujsi → son Euris → first cousin on the mother's side). GIN indexes on `subject` (trigram) and `aliases` support similarity search.
- **`memory_facts_archive`**: destination of the weekly cleanup of expired facts. Same structure as `memory_facts` plus `archived_at` and `archive_reason` (`expired` | `superseded` | `merged`). Archived facts are then condensed into a weekly Qdrant episode.

### Columns added to `memory_facts`

- **`pompeo_note TEXT`**: the LLM's inner monologue at insert time, i.e. the "why" behind the fact (already used by the Calendar Agent, now standardized across all sources).
- **`entity_refs JSONB`**: entities extracted from the fact, in structured form: `{people: [], places: [], products: [], amounts: []}`. Allows SQL queries on people/places without a full-text scan (e.g. `entity_refs->'people' ? 'euris vruzhaj'`).

### Applied to

- `alpha/db/postgres.sql` updated (schema v2)
- Live on the Patroni primary (`postgres-1`, namespace `persistence`, DB `pompeo`)

---

## [2026-03-21] Actual Budget: bank statement import via Telegram

### New workflows

- **`💰 Actual — Import Estratto Conto [Telegram]`** (`qtvB3r0cgejyCxUp`): imports the Banca Sella bank statement (CSV) into Actual Budget via Telegram.
  - Trigger: Telegram document with the caption `Estratto conto`
  - Parses the Banca Sella CSV (separator `;`, dates as `dd/mm/yyyy`, amounts with `.` as the decimal separator)
  - Automatically skips `SALDO FINALE` and `SALDO INIZIALE`
  - GPT-4.1 classification in batches of 30 transactions: assigns payee and category, and automatically creates the missing ones in Actual
  - Import via `/transactions/import` with native dedup through `imported_id` (pattern `banca-sella-{Id}` or hash fallback)
  - Telegram report with newly imported transactions, already present ones, and the CSV total
- **`⏰ Actual — Reminder Estratto Conto [Schedule]`** (`w0oJ1i6sESvaB5W1`): daily reminder (09:00) on Telegram if the Google task "Actual - Estratto conto" in the "Finanze" list is overdue.
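The CSV rules listed for the import workflow (`;` separator, `dd/mm/yyyy` dates, balance rows skipped, `imported_id` with a hash fallback) can be illustrated with a short Python sketch. The column names (`Id`, `Data`, `Descrizione`, `Importo`), the assumption that the balance markers appear in the description column, and the exact hash format are all hypothetical; the real workflow is an n8n Code node and the resulting dicts are an intermediate form, not the exact payload of `/transactions/import`.

```python
import csv
import hashlib
import io
from datetime import datetime
from decimal import Decimal

SKIP_MARKERS = ("SALDO FINALE", "SALDO INIZIALE")


def parse_statement(csv_text):
    """Parse a ';'-separated statement into transaction dicts.

    Column names are assumptions for this sketch; the real export may
    use different headers.
    """
    transactions = []
    reader = csv.DictReader(io.StringIO(csv_text), delimiter=";")
    for row in reader:
        description = (row.get("Descrizione") or "").strip()
        # Opening/closing balance rows are not transactions.
        if any(marker in description.upper() for marker in SKIP_MARKERS):
            continue
        date = datetime.strptime(row["Data"].strip(), "%d/%m/%Y").date()
        # '.' is already the decimal separator; store integer cents.
        amount_cents = int((Decimal(row["Importo"].strip()) * 100).to_integral_value())
        row_id = (row.get("Id") or "").strip()
        if row_id:
            imported_id = f"banca-sella-{row_id}"
        else:
            # Hash fallback (format is hypothetical): stable across re-imports.
            digest = hashlib.sha256(
                f"{date.isoformat()}|{amount_cents}|{description}".encode("utf-8")
            ).hexdigest()[:16]
            imported_id = f"banca-sella-{digest}"
        transactions.append({
            "date": date.isoformat(),
            "amount_cents": amount_cents,
            "notes": description,
            "imported_id": imported_id,
        })
    return transactions
```

Because the fallback hash depends only on the row's own fields, importing the same statement twice yields the same `imported_id` values, which is what lets the dedup on the Actual side recognize already-present transactions.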

### Technical notes

- Binary data is read with `getBinaryDataBuffer()` (compatible with n8n's filesystem binary mode)
- The GPT loop is handled by iterating inside the Code node (no `splitInBatches`, which is unstable with multiple inputs)
- Missing payees/categories are created on the fly and reused in later batches of the same run
- Actual dedup: `added` = new, `updated` = already present

---

## [2026-03-21] Calendar Agent: sync and schedule fixes

### Problems fixed

- **`ON CONFLICT DO NOTHING` → `DO UPDATE`**: modified events (time, title) were being ignored. They are now updated in Postgres.
- **Cleanup of cancelled events**: added the `🗑️ Cleanup Cancellati` step, which runs `DELETE FROM memory_facts WHERE source_ref NOT IN (current UIDs from HA)` over the 7-day window. If Martin cancels a meeting, it disappears from Postgres at the next run.
- **Schedule `*/30 * * * *`**: moved from a daily 06:30 cron to every 30 minutes, so the calendar in Postgres stays aligned with the source of truth (HA/Google Calendar).

### Updated flow

```
... → 📋 Parse GPT → 🗑️ Cleanup Cancellati → 🔀 Riemetti → 💾 Upsert → 📦 → 📱
```

---

## [2026-03-20] Calendar Agent: first Pompeo workflow in production

### What was done

First Pompeo agent deployed and active on n8n: `📅 Pompeo — Calendar Agent [Schedule]` (ID `4ZIEGck9n4l5qaDt`).

### Design

- **Data source**: the Home Assistant REST API is used as a Google Calendar proxy. This avoids direct Google OAuth in n8n and works for all 25 calendars registered in HA.
- **Tracked calendars** (12): Lavoro, Famiglia, Spazzatura, Pulizie, Formula 1, WEC, Inter, Compleanni, Varie, Festività Italia, Films (Radarr), Serie TV (Sonarr).
- **LLM enrichment**: GPT-4.1 (via Copilot) classifies each event: category, action_required, do_not_disturb, priority, behavioral_context, pompeo_note.
- **Dedup**: `memory_facts.source_ref` = HA event UID; `ON CONFLICT DO NOTHING` on a partial unique index.
- **Telegram briefing**: every morning at 06:30, a summary of the next 7 days' events grouped by calendar.

### DB migrations applied

- `ALTER TABLE memory_facts ADD COLUMN source_ref TEXT`: column for the external dedup ID
- `CREATE UNIQUE INDEX memory_facts_dedup_idx ON memory_facts (user_id, source, source_ref) WHERE source_ref IS NOT NULL`
- `CREATE INDEX idx_memory_facts_source_ref ON memory_facts (source_ref) WHERE source_ref IS NOT NULL`

### n8n credentials created

| ID | Name | Type |
|---|---|---|
| `u0JCseXGnDG5hS9F` | Home Assistant API | HTTP Header Auth |
| `mRqzxhSboGscolqI` | Pompeo — PostgreSQL | Postgres (pompeo/martin) |

### Workflow flow

```
⏰ Schedule (06:30) → 📅 Range → 🔑 Token Copilot
  → 📋 Calendari (12 items) → 📡 HA Fetch (×12) → 🏷️ Estrai + Tag
  → 📝 Prompt (dedup) → 🤖 GPT-4.1 → 📋 Parse
  → 💾 Postgres Upsert (memory_facts) → 📦 Aggrega → 📱 Telegram
```

---

## [2026-03-21] ADR (Message Broker): no dedicated broker

### Decision

**No dedicated message broker will be deployed** (neither NATS JetStream nor Redis Streams). The blackboard pattern is implemented entirely on PostgreSQL + n8n webhooks.

### Rationale

At initial design time, the broker was needed to decouple the agents from the Arbiter. With the introduction of the `agent_messages` table in the `pompeo` database, this goal is already achieved:

```
n8n agent → INSERT agent_messages (arbiter_decision = NULL)
Arbiter   → SELECT WHERE arbiter_decision IS NULL  (cron polling)
          → UPDATE arbiter_decision = 'notify' | 'defer' | 'discard'
```

The high-priority flow (which bypasses the Arbiter's cron polling) is handled by the agent calling the **Arbiter's n8n webhook** directly, with zero additional infrastructure.
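The insert/poll/update cycle of the blackboard can be sketched in a few lines of Python. To keep the example self-contained, `sqlite3` stands in for PostgreSQL here, and the table is trimmed to a handful of columns; the function names `publish` and `arbiter_poll` and the reduced schema are assumptions for illustration, not the project's actual code.

```python
import sqlite3

# sqlite3 is a stand-in for Postgres; the column list is trimmed to the
# fields needed to show the pattern.
conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE agent_messages (
           id INTEGER PRIMARY KEY,
           agent TEXT NOT NULL,
           subject TEXT NOT NULL,
           arbiter_decision TEXT  -- NULL means pending
       )"""
)


def publish(agent, subject):
    """Agent side: insert an observation with no decision yet."""
    conn.execute(
        "INSERT INTO agent_messages (agent, subject) VALUES (?, ?)",
        (agent, subject),
    )


def arbiter_poll(decide):
    """Arbiter side: decide every pending row and record the outcome.

    `decide` is a callable returning 'notify', 'defer' or 'discard'.
    Returns the number of rows processed in this polling pass.
    """
    pending = conn.execute(
        "SELECT id, agent, subject FROM agent_messages "
        "WHERE arbiter_decision IS NULL ORDER BY id"
    ).fetchall()
    for msg_id, agent, subject in pending:
        conn.execute(
            "UPDATE agent_messages SET arbiter_decision = ? WHERE id = ?",
            (decide(agent, subject), msg_id),
        )
    return len(pending)
```

Rows are never deleted in this sketch, so decided messages remain queryable afterwards, which is the audit-log property the ADR relies on.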

### Alternatives considered

| Option | Outcome | Rationale |
|---|---|---|
| `agent_messages` on PostgreSQL | ✅ **Adopted** | Already deployed, persistent, queryable, audit log for free |
| Redis Streams | ⏸ Deferred | Already in the cluster, worth evaluating if volume grows |
| NATS JetStream | ❌ Rejected | New component to operate, overkill for the current volume (a few msgs/hour) and for the single-household use case |

### Impact on README.md

The "Message Broker (Blackboard Pattern)" section remains conceptually valid. The `agent` field and the message schema defined in the README are honored in the `agent_messages` table; only the transport changes (Postgres instead of NATS/Redis).

---

## [2026-03-21] PostgreSQL: "pompeo" database and ALPHA_PROJECT schema

### Overview

Created the `pompeo` database on the Patroni cluster (namespace `persistence`) and applied the initial schema for Pompeo's structured memory. Second milestone of Phase 0: Infrastructure Bootstrap.

---

### Patroni manifest change

Added `pompeo: martin` to the `databases` section of `infra/cluster/persistence/patroni/postgres.yaml`. The database was created automatically by the Zalando Operator with no downtime for the other databases.

Idempotent DDL script available at: `alpha/db/postgres.sql`

---

### Design decision: multi-tenancy in PostgreSQL too

Consistent with the choice made for Qdrant, all tables include the field `user_id TEXT NOT NULL DEFAULT 'martin'`. The values `'martin'` and `'shared'` are seeded in `user_profile` as the system's initial users.

Adding a new user in the future requires no schema changes: inserting a row into `user_profile` and using the new `user_id` in INSERTs is enough.

---

### Design decision: agent_messages as a persistent blackboard

The `agent_messages` table implements the message broker's **blackboard pattern**: each n8n agent inserts its observations with `arbiter_decision = NULL` (pending). The Proactive Arbiter reads the queued messages, decides (`notify` / `defer` / `discard`) and updates `arbiter_decision`, `arbiter_reason` and `processed_at`.

Compared with using only NATS/Redis as a broker, this approach guarantees a **permanent audit log** of all observations and decisions, queryable via SQL for debugging, tuning and historical analysis.

---

### Schema created

**5 tables** in the `pompeo` database:

| Table | Role |
|---|---|
| `user_profile` | Static per-user preferences (language, timezone, notification style, quiet hours). Seed: `martin`, `shared` |
| `memory_facts` | Episodic facts produced by all agents, with TTL (`expires_at`) and a reference to the Qdrant point (`qdrant_id`) |
| `finance_documents` | Structured financial documents: bills, invoices, payslips. Includes `raw_text` for embedding |
| `behavioral_context` | IoT/behavioral context for the Arbiter: DND, home presence, event type |
| `agent_messages` | Message broker blackboard: agent observations + Arbiter decisions |

**15 indexes** in total:

| Index | Table | Definition |
|---|---|---|
| `idx_memory_facts_user_source_cat` | `memory_facts` | `(user_id, source, category)` |
| `idx_memory_facts_expires` | `memory_facts` | `(expires_at)` WHERE NOT NULL |
| `idx_memory_facts_action` | `memory_facts` | `(user_id, action_required)` WHERE true |
| `idx_finance_docs_user_date` | `finance_documents` | `(user_id, doc_date DESC)` |
| `idx_finance_docs_correspondent` | `finance_documents` | `(user_id, correspondent)` |
| `idx_behavioral_ctx_user_time` | `behavioral_context` | `(user_id, start_at, end_at)` |
| `idx_behavioral_ctx_dnd` | `behavioral_context` | `(user_id, do_not_disturb)` WHERE true |
| `idx_agent_msgs_pending` | `agent_messages` | `(user_id, priority, created_at)` WHERE pending |
| `idx_agent_msgs_agent_type` | `agent_messages` | `(agent, event_type, created_at)` |
| `idx_agent_msgs_expires` | `agent_messages` | `(expires_at)` WHERE pending AND NOT NULL |

---

### Phase 0: updated status

- [x] ~~Deploy **Qdrant** on the cluster~~ ✅ 2026-03-21
- [x] ~~Qdrant collections with `user_id` multi-tenancy~~ ✅ 2026-03-21
- [x] ~~Qdrant payload indexes~~ ✅ 2026-03-21
- [x] ~~`pompeo` database + PostgreSQL schema~~ ✅ 2026-03-21
- [ ] Verify embedding endpoint via Copilot (`text-embedding-3-small`)
- [ ] Migrate to Ollama `nomic-embed-text` (when the LLM server is online)

---

## [2026-03-21] Qdrant: deploy and collections setup (Phase 0)

### Overview

Completed the deploy of **Qdrant v1.17.0** on the Kubernetes cluster (namespace `persistence`) and the creation of the collections for Pompeo's semantic memory. This is the first milestone of Phase 0: Infrastructure Bootstrap.

---

### Infrastructure deploy

Qdrant was deployed via the official Helm chart (`qdrant/qdrant`) in the `persistence` namespace, consistent with the existing infrastructure pattern (Longhorn storage, Sealed Secrets, Prometheus ServiceMonitor).

**Resources created:**

| Resource | Detail |
|---|---|
| StatefulSet `qdrant` | 1/1 pod Running, image `qdrant/qdrant:v1.17.0` |
| PVC `qdrant-storage-qdrant-0` | 20Gi Longhorn RWO |
| Service `qdrant` | ClusterIP, ports 6333 (REST), 6334 (gRPC), 6335 (p2p) |
| SealedSecret `qdrant-api-secret` | Encrypted API key, namespace `persistence` |
| ServiceMonitor `qdrant` | Prometheus scraping on `:6333/metrics`, label `release: monitoring` |

**Internal endpoint:** `qdrant.persistence.svc.cluster.local:6333`

Manifests in: `infra/cluster/persistence/qdrant/`

---

### Design decision: multi-tenant collections (Option B)

**Problem addressed**: naming the collections `martin_episodes`, `martin_knowledge`, `martin_preferences` would have tied Pompeo to being a single-person assistant, making it impossible to extend the system to other family members later without a migration.

**Choice adopted**: a multi-tenant architecture with 3 shared collections, isolated through a `user_id` field in the payload of every vector point.

```
episodes    ← user_id: "martin" | "shared" | <future users>
knowledge   ← user_id: "martin" | "shared" | <future users>
preferences ← user_id: "martin" | "shared" | <future users>
```

The value `"shared"` is reserved for household/family data visible to all users (e.g. shared calendar, household documents, joint finances). n8n queries use a `should: [user_id=martin, user_id=shared]` filter to retrieve both personal and shared context.

**Advantages**: adding a new user later requires no infrastructure change, only including the new `user_id` in upserts and queries.
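As an illustration of the `should` filter mentioned above, the following Python sketch builds the filter in Qdrant's JSON filter syntax and embeds it in a search request body. The helper name `tenant_filter` is hypothetical, and the zero vector is only a placeholder for a real 1536-dimensional embedding; the actual queries are issued from n8n.

```python
import json


def tenant_filter(user_id, include_shared=True):
    """Build a Qdrant payload filter matching the user's own points and,
    optionally, the household-wide "shared" ones."""
    owners = [user_id]
    if include_shared and user_id != "shared":
        owners.append("shared")
    return {
        "should": [
            {"key": "user_id", "match": {"value": owner}} for owner in owners
        ]
    }


# Body for a vector search against one of the three collections.
# The query vector is a placeholder, not a real embedding.
search_body = {
    "vector": [0.0] * 1536,
    "filter": tenant_filter("martin"),
    "limit": 5,
}
print(json.dumps(search_body["filter"], indent=2))
```

Supporting another family member would then only mean calling `tenant_filter` with a different `user_id`, in line with the "no infrastructure change" goal.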

---

### Collections created

All 3 collections are operational (status `green`):

| Collection | Content |
|---|---|
| `episodes` | Episodic facts with timestamp (email, IoT, calendar, conversations) |
| `knowledge` | Documents, Outline notes, newsletters, knowledge base |
| `preferences` | Per-user preferences, habits and behavioral patterns |

**Common payload schema** (5 indexes on each collection):

| Field | Type | Purpose |
|---|---|---|
| `user_id` | keyword | Multi-tenant filter (`"martin"`, `"shared"`) |
| `source` | keyword | Origin of the data (`"email"`, `"calendar"`, `"iot"`, `"paperless"`, …) |
| `category` | keyword | Semantic domain (`"finance"`, `"work"`, `"personal"`, …) |
| `date` | datetime | Timestamp of the fact, filterable by range |
| `action_required` | bool | Flag for the Proactive Arbiter |

**Vector size**: 1536 (compatible with `text-embedding-3-small` via GitHub Copilot, bootstrap phase). To be revisited when migrating to `nomic-embed-text` on Ollama.

---

### Phase 0: status at the time of the Qdrant deploy

- [x] ~~Deploy **Qdrant** on the cluster~~
- [x] ~~Create collections with `user_id` multi-tenancy~~
- [x] ~~Payload indexes: `user_id`, `source`, `category`, `date`, `action_required`~~
- [x] ~~Run **PostgreSQL migrations** on Patroni~~ ✅ completed in the same session

## [2026-03-21] Jellyfin Playback Agent: Block A completed

### New n8n workflow

206

aws-lambda/README.md

Normal file

@@ -0,0 +1,206 @@

# Development Plan for the "Pompeo" Lambda

This document describes the analysis, design, implementation and deploy plan for the AWS Lambda function that bridges the Alexa skill "Pompeo" and the n8n instance of the ALPHA_PROJECT project.

## 1. Goal

The Lambda function has a single purpose: to act as an ultra-lightweight, fast bridge. It receives the requests sent by the Alexa skill, forwards them securely to the n8n webhook that hosts the agent logic, and returns to Alexa the text response (TTS) generated by n8n.

---

## 2. Analysis and Requirements

### Functional Requirements

1. **Request reception:** it must be able to receive and interpret the JSON object sent by the Alexa service.
2. **Forwarding to n8n:** it must forward the body of the Alexa request to a predefined n8n webhook.
3. **Authentication (optional but recommended):** the call to n8n should include a `secret token` to ensure that only the Lambda can trigger the workflow.
4. **Receiving the n8n response:** it must wait for the response from n8n, which will contain the text to speak.
5. **Alexa response formatting:** it must build a valid JSON object for Alexa, containing the TTS string.
6. **Error handling:** it must respond to Alexa with a polite error message if n8n is unreachable or returns an error.

### Non-Functional Requirements

1. **Zero cost:** the entire infrastructure must operate within the AWS Free Tier.
    * **AWS Lambda:** the free tier includes 1 million requests/month and 400,000 GB-seconds, which is more than enough.
    * **API Gateway (if used):** the free tier includes 1 million API calls/month.
2. **Private distribution:** the Alexa skill must not be published to the public store. It must remain in the "In Development" state (`development`), which makes it automatically available only on the Echo devices associated with the developer's Amazon account.
3. **Low latency:** the function must be written in a language that performs well for I/O (e.g. Python, Node.js) and keep its logic minimal so as not to introduce delays.
4. **Security:** communication between the Lambda and n8n must use HTTPS. The webhook URL and the secret token must be managed through the Lambda's environment variables, not hardcoded in the code.

---

## 3. Design

### Architecture

```
+--------------+   1. User speaks          +-----------------+   3. Forward request         +---------------+
|              | ------------------------> |                 | ---------------------------> |               |
| Echo Device  |                           |   AWS Lambda    |                              |  n8n Webhook  |
|              | <------------------------ |                 | <--------------------------- |               |
+--------------+   5. TTS response         +-----------------+   4. TTS response            +---------------+
                   (Text-to-Speech)                 |
                                                    | 2. Trigger
                                                    |
                                         +---------------------+
                                         |  Alexa Skills Kit   |
                                         +---------------------+
```

### Technical Details

* **Runtime:** **Python 3.11**. It is well suited to I/O-bound tasks like this one and integrates well with the AWS environment.
* **Trigger:** the Lambda's trigger will be **"Alexa Skills Kit"**. For security, it will be configured to accept calls only from the specific Skill ID of "Pompeo".
* **IAM Role:** an IAM role will be created with two policies:
    1. **Trust Policy:** allows the `lambda.amazonaws.com` service to assume this role.
    2. **Permissions Policy:** the AWS managed policy `AWSLambdaBasicExecutionRole` will be used, which grants the permissions needed to write logs to Amazon CloudWatch (`logs:CreateLogGroup`, `logs:CreateLogStream`, `logs:PutLogEvents`). No other permissions are needed.
* **Code logic (Python):**
    1. The `lambda_handler(event, context)` function is the entry point.
    2. It reads the webhook URL and the secret token from environment variables (`os.environ.get('N8N_WEBHOOK_URL')`).
    3. It performs an HTTP POST request (using the `requests` or `urllib3` library) to the n8n URL.
    4. The POST `body` contains the entire `event` object received from Alexa. The `header` carries the `secret token`.
    5. It waits for n8n's response. If the response is 200 OK and contains a JSON with a `tts_response` field, it proceeds.
    6. It builds the response object for Alexa, as per the official documentation.
* **Error handling:** on timeout, a non-200 HTTP status code, or malformed JSON from n8n, the Lambda builds a standard error response for Alexa (e.g. "Mi dispiace, si è verificato un problema. Riprova più tardi.").

---

## 4. Implementazione (Codice)

|

||||

|

||||

La Lambda richiederà un singolo file `index.py` e un file `requirements.txt` per le dipendenze.

|

||||

|

||||

**`requirements.txt`:**

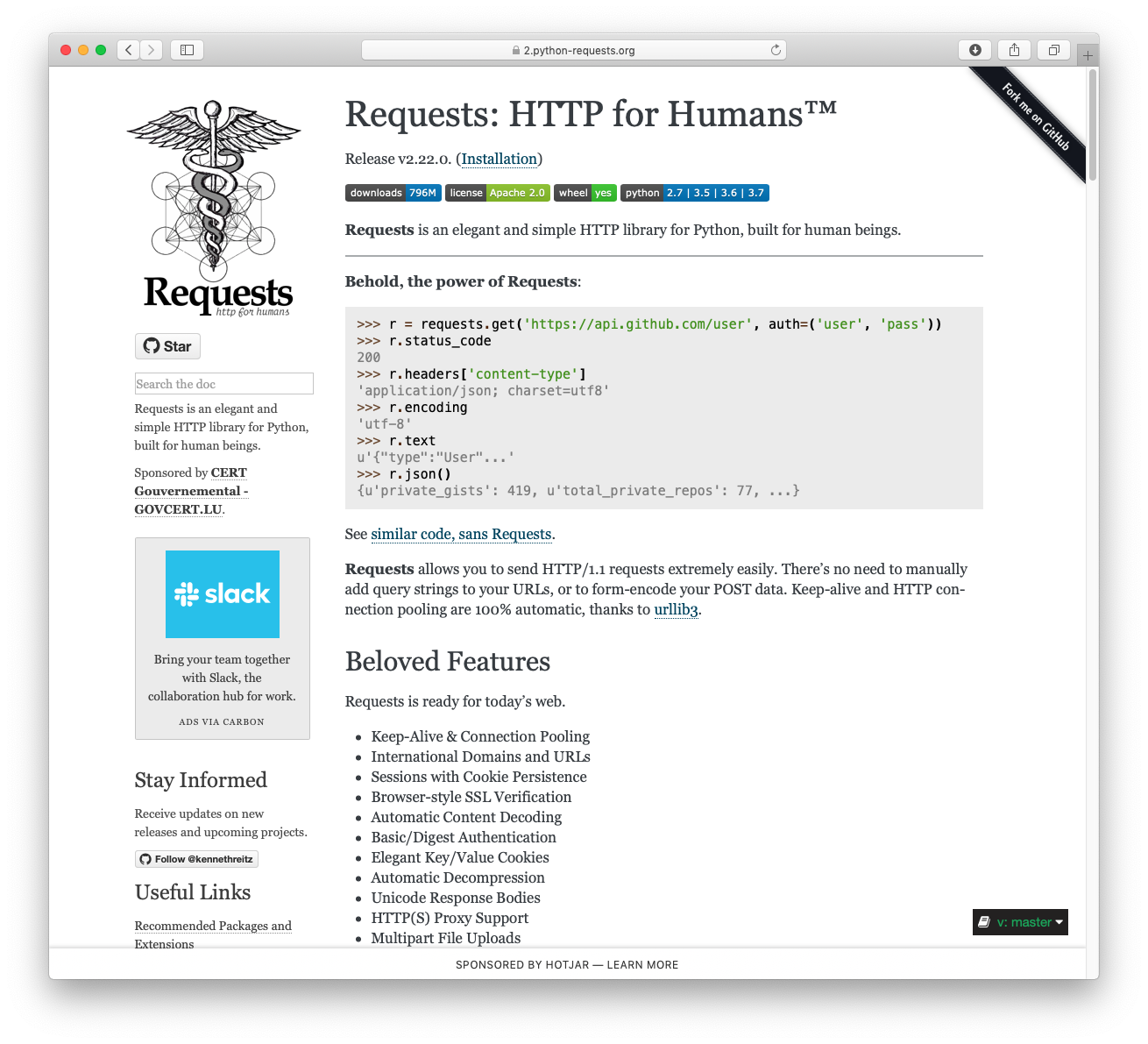

|

||||

```

|

||||

requests

|

||||

```

|

||||

|

||||

**`index.py` (Esempio Boilerplate):**

|

||||

```python

|

||||

import os

|

||||

import json

|

||||

import requests

|

||||

|

||||

# Recupera le variabili d'ambiente

|

||||

N8N_WEBHOOK_URL = os.environ.get('N8N_WEBHOOK_URL')

|

||||

N8N_SECRET_TOKEN = os.environ.get('N8N_SECRET_TOKEN')

|

||||

|

||||

def build_alexa_response(text):

|

||||

"""Costruisce la risposta JSON per Alexa."""

|

||||

return {

|

||||

'version': '1.0',

|

||||

'response': {

|

||||

'outputSpeech': {

|

||||

'type': 'PlainText',

|

||||

'text': text

|

||||

},

|

||||

'shouldEndSession': True

|

||||

}

|

||||

}

|

||||

|

||||

def lambda_handler(event, context):

|

||||

"""Punto di ingresso della funzione Lambda."""

|

||||

print(f"Richiesta ricevuta da Alexa: {json.dumps(event)}")

|

||||

|

||||

if not N8N_WEBHOOK_URL or not N8N_SECRET_TOKEN:

|

||||

print("Errore: Variabili d'ambiente non configurate.")

|

||||

return build_alexa_response("Errore di configurazione del server.")

|

||||

|

||||

headers = {

|

||||

'Content-Type': 'application/json',

|

||||

'X-N8N-Webhook-Secret': N8N_SECRET_TOKEN

|

||||

}

|

||||

|

||||

try:

|

||||

response = requests.post(

|

||||

N8N_WEBHOOK_URL,

|

||||

headers=headers,

|

||||

data=json.dumps(event),

|

||||

timeout=8 # Alexa attende max 10 secondi

|

||||

)

|

||||

response.raise_for_status() # Solleva un'eccezione per status code non-2xx

|

||||

|

||||

n8n_data = response.json()

|

||||

tts_text = n8n_data.get('tts_response', 'Nessuna risposta ricevuta da Pompeo.')

|

||||

|

||||

print(f"Risposta da n8n: {tts_text}")

|

||||

return build_alexa_response(tts_text)

|

||||

|

||||

except requests.exceptions.RequestException as e:

|

||||

print(f"Errore nella chiamata a n8n: {e}")

|

||||

return build_alexa_response("Mi dispiace, non riesco a contattare Pompeo in questo momento.")

|

||||

except Exception as e:

|

||||

print(f"Errore generico: {e}")

|

||||

return build_alexa_response("Si è verificato un errore inaspettato.")

|

||||

|

||||

```

|

||||

Il codice andrà zippato insieme alla cartella delle dipendenze installate localmente (`pip install -r requirements.txt -t .`).

|

||||

|

||||

---

|

||||

|

||||

## 5. Procedura Burocratica di Deploy e Configurazione

|

||||

|

||||

Questa è la checklist passo-passo per mettere tutto in funzione.

|

||||

|

||||

### Passo 1: Creazione del Ruolo IAM

|

||||

|

||||

1. Vai alla console AWS -> **IAM** -> **Roles**.

|

||||

2. Clicca su **Create role**.

|

||||

3. **Trusted entity type**: Seleziona **AWS service**.

|

||||

4. **Use case**: Seleziona **Lambda**, poi clicca **Next**.

|

||||

5. Nella pagina **Add permissions**, cerca e seleziona la policy `AWSLambdaBasicExecutionRole`. Clicca **Next**.

|

||||

6. **Role name**: Inserisci un nome, es. `PompeoAlexaLambdaRole`.

|

||||

7. Clicca **Create role**.

### Step 2: Create the Lambda Function

1. Prepare the deployment package:
    * Create a folder, e.g. `lambda_package`.
    * Save the Python code as `index.py` in that folder.
    * Save `requirements.txt` in the same folder.
    * From a terminal, inside the folder, run: `pip install -r requirements.txt --target .`
    * Zip the entire contents of the `lambda_package` folder (not the folder itself).
2. Go to the AWS console -> **Lambda**.
3. Click **Create function**.
4. Select **Author from scratch**.
5. **Function name**: `pompeo-alexa-bridge`.
6. **Runtime**: select **Python 3.11**.
7. **Architecture**: `x86_64`.
8. **Permissions**: expand "Change default execution role", select "Use an existing role", and choose the `PompeoAlexaLambdaRole` role created earlier.
9. Click **Create function**.
10. On the function page, go to **Code source** and click **Upload from -> .zip file**. Upload the zip you created. Because the code lives in `index.py`, also set the **Handler** under **Runtime settings** to `index.lambda_handler` (the console default is `lambda_function.lambda_handler`).
11. Go to **Configuration -> Environment variables** and add:
    * `N8N_WEBHOOK_URL`: the URL of your n8n webhook.
    * `N8N_SECRET_TOKEN`: the secret token you will configure on n8n.
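A minimal sketch of how the function could read these two variables and fail fast when one is missing. `BridgeConfig` and `load_config` are hypothetical names introduced for this example and are not taken from `index.py`; only the variable names `N8N_WEBHOOK_URL` and `N8N_SECRET_TOKEN` come from the steps above.

```python
import os
from typing import Mapping, NamedTuple


class BridgeConfig(NamedTuple):
    webhook_url: str
    secret_token: str


def load_config(env: Mapping[str, str] = os.environ) -> BridgeConfig:
    """Read the bridge settings and raise early if either one is unset or empty."""
    required = ("N8N_WEBHOOK_URL", "N8N_SECRET_TOKEN")
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return BridgeConfig(env["N8N_WEBHOOK_URL"], env["N8N_SECRET_TOKEN"])
```

Loading the configuration once at module import time would surface a misconfiguration in CloudWatch on the first invocation, before any request is forwarded to n8n.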

### Step 3: Create the Alexa Skill "Pompeo"

1. Go to the [Alexa Developer Console](https://developer.amazon.com/alexa/console/ask).
2. Click **Create Skill**.
3. **Skill name**: `Pompeo`.
4. **Choose a model**: `Custom`.
5. **Choose a method to host**: `Provision your own`.
6. Click **Create skill**.
7. Once in the skill dashboard, open **Endpoint** in the left-hand menu.
8. **Copy your Skill ID**. It is a string of the form `amzn1.ask.skill.xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx`.

### Step 4: Connect the Skill to the Lambda

1. Go back to the **Lambda** function page on AWS.
2. In the **Function overview** section, click **Add trigger**.
3. As the source, select **Alexa Skills Kit**.
4. Enable **Skill ID verification** and paste the **Skill ID** copied from the Alexa console.
5. Click **Add**.
6. Now, at the top right of the Lambda page, **copy the function's ARN (Amazon Resource Name)**.
7. Return to the skill's **Endpoint** page in the Alexa Developer Console.
8. Select **AWS Lambda ARN** as the Service Endpoint Type.
9. Paste the Lambda ARN into the **Default Region** field.
10. Click **Save Endpoints** at the top.
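Skill ID verification on the trigger already rejects invocations coming from other skills. As an optional second check inside the handler, the sketch below compares the ID carried by the request with the expected one. The helper names are hypothetical and not part of `index.py`; the field paths (`context.System.application.applicationId`, with `session.application.applicationId` as a fallback) follow the standard Alexa request envelope.

```python
from typing import Optional


def request_skill_id(event: dict) -> Optional[str]:
    """Return the applicationId carried by an Alexa request envelope, if any."""
    app = event.get("context", {}).get("System", {}).get("application", {})
    if not app:
        # Older or simplified envelopes carry the ID on the session object.
        app = event.get("session", {}).get("application", {})
    return app.get("applicationId")


def is_expected_skill(event: dict, expected_skill_id: str) -> bool:
    """True only when the request was issued by the skill we expect."""
    return request_skill_id(event) == expected_skill_id
```

The expected ID could be supplied through an additional environment variable, which is an assumption of this sketch and not something the steps above configure.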

### Step 5: Test

1. In the Alexa Developer Console, go to the **Test** tab.
2. In the text box, type "apri pompeo" or any other launch phrase.
3. Check the Lambda function's logs in **AWS CloudWatch** to see the incoming request and the response.
4. The skill is now active in "Development" mode and will work on all Echo devices linked to your Amazon account, while remaining completely private.
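Before testing by voice, it can help to exercise the function with a hand-built request. The sketch below creates a minimal `LaunchRequest` envelope and checks that a response has the shape Alexa needs to speak a plain-text reply. Both helper names are assumptions for illustration, and the check presumes the handler answers with `PlainText` speech, which the visible part of `index.py` suggests but does not confirm.

```python
import json


def make_launch_event(skill_id: str, locale: str = "it-IT") -> dict:
    """Minimal LaunchRequest envelope, similar to what a launch phrase produces."""
    application = {"applicationId": skill_id}
    return {
        "version": "1.0",
        "session": {
            "new": True,
            "sessionId": "SessionId.local-test",
            "application": application,
        },
        "context": {"System": {"application": application}},
        "request": {
            "type": "LaunchRequest",
            "requestId": "EdwRequestId.local-test",
            "timestamp": "2026-03-21T10:00:00Z",
            "locale": locale,
        },
    }


def is_plain_text_response(response: dict) -> bool:
    """Check the fields Alexa needs in order to speak a PlainText reply."""
    speech = response.get("response", {}).get("outputSpeech", {})
    return (
        response.get("version") == "1.0"
        and speech.get("type") == "PlainText"
        and isinstance(speech.get("text"), str)
        and bool(speech["text"])
    )
```

The output of `json.dumps(make_launch_event(skill_id))`, with your own Skill ID, can be pasted as a test event in the Lambda console, and the returned JSON can then be checked with `is_plain_text_response`.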

BIN

aws-lambda/pompeo-alexa-bridge.zip

Normal file

Binary file not shown.

BIN

aws-lambda/src/81d243bd2c585b0f4821__mypyc.cp313-win_amd64.pyd

Normal file

Binary file not shown.

BIN

aws-lambda/src/bin/normalizer.exe

Normal file

Binary file not shown.

1

aws-lambda/src/certifi-2026.2.25.dist-info/INSTALLER

Normal file

@@ -0,0 +1 @@

pip

78

aws-lambda/src/certifi-2026.2.25.dist-info/METADATA

Normal file

@@ -0,0 +1,78 @@

Metadata-Version: 2.4
Name: certifi
Version: 2026.2.25
Summary: Python package for providing Mozilla's CA Bundle.
Home-page: https://github.com/certifi/python-certifi
Author: Kenneth Reitz
Author-email: me@kennethreitz.com
License: MPL-2.0
Project-URL: Source, https://github.com/certifi/python-certifi
Classifier: Development Status :: 5 - Production/Stable
Classifier: Intended Audience :: Developers
Classifier: License :: OSI Approved :: Mozilla Public License 2.0 (MPL 2.0)
Classifier: Natural Language :: English
Classifier: Programming Language :: Python
Classifier: Programming Language :: Python :: 3
Classifier: Programming Language :: Python :: 3 :: Only
Classifier: Programming Language :: Python :: 3.7
Classifier: Programming Language :: Python :: 3.8
Classifier: Programming Language :: Python :: 3.9
Classifier: Programming Language :: Python :: 3.10
Classifier: Programming Language :: Python :: 3.11
Classifier: Programming Language :: Python :: 3.12
Classifier: Programming Language :: Python :: 3.13
Classifier: Programming Language :: Python :: 3.14
Requires-Python: >=3.7
License-File: LICENSE
Dynamic: author
Dynamic: author-email
Dynamic: classifier
Dynamic: description
Dynamic: home-page
Dynamic: license
Dynamic: license-file
Dynamic: project-url
Dynamic: requires-python
Dynamic: summary

Certifi: Python SSL Certificates
================================

Certifi provides Mozilla's carefully curated collection of Root Certificates for
validating the trustworthiness of SSL certificates while verifying the identity
of TLS hosts. It has been extracted from the `Requests`_ project.

Installation
------------

``certifi`` is available on PyPI. Simply install it with ``pip``::

    $ pip install certifi

Usage
-----

To reference the installed certificate authority (CA) bundle, you can use the
built-in function::

    >>> import certifi

    >>> certifi.where()
    '/usr/local/lib/python3.7/site-packages/certifi/cacert.pem'

Or from the command line::

    $ python -m certifi
    /usr/local/lib/python3.7/site-packages/certifi/cacert.pem

Enjoy!

.. _`Requests`: https://requests.readthedocs.io/en/master/

Addition/Removal of Certificates
--------------------------------

Certifi does not support any addition/removal or other modification of the
CA trust store content. This project is intended to provide a reliable and
highly portable root of trust to python deployments. Look to upstream projects
for methods to use alternate trust.

14

aws-lambda/src/certifi-2026.2.25.dist-info/RECORD

Normal file

@@ -0,0 +1,14 @@

certifi-2026.2.25.dist-info/INSTALLER,sha256=zuuue4knoyJ-UwPPXg8fezS7VCrXJQrAP7zeNuwvFQg,4
certifi-2026.2.25.dist-info/METADATA,sha256=4NMuGXdg_hBiRA3paKVXYcDmE3VXEBWxTvCL2xlDyPU,2474
certifi-2026.2.25.dist-info/RECORD,,
certifi-2026.2.25.dist-info/WHEEL,sha256=YCfwYGOYMi5Jhw2fU4yNgwErybb2IX5PEwBKV4ZbdBo,91
certifi-2026.2.25.dist-info/licenses/LICENSE,sha256=6TcW2mucDVpKHfYP5pWzcPBpVgPSH2-D8FPkLPwQyvc,989
certifi-2026.2.25.dist-info/top_level.txt,sha256=KMu4vUCfsjLrkPbSNdgdekS-pVJzBAJFO__nI8NF6-U,8
certifi/__init__.py,sha256=c9eaYufv1pSLl0Q8QNcMiMLLH4WquDcxdPyKjmI4opY,94
certifi/__main__.py,sha256=xBBoj905TUWBLRGANOcf7oi6e-3dMP4cEoG9OyMs11g,243
certifi/__pycache__/__init__.cpython-313.pyc,,
certifi/__pycache__/__main__.cpython-313.pyc,,
certifi/__pycache__/core.cpython-313.pyc,,
certifi/cacert.pem,sha256=_JFloSQDJj5-v72te-ej6sD6XTJdPHBGXyjTaQByyig,272441
certifi/core.py,sha256=XFXycndG5pf37ayeF8N32HUuDafsyhkVMbO4BAPWHa0,3394
certifi/py.typed,sha256=47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU,0

5

aws-lambda/src/certifi-2026.2.25.dist-info/WHEEL

Normal file

@@ -0,0 +1,5 @@

Wheel-Version: 1.0
Generator: setuptools (82.0.0)
Root-Is-Purelib: true
Tag: py3-none-any

20

aws-lambda/src/certifi-2026.2.25.dist-info/licenses/LICENSE

Normal file

@@ -0,0 +1,20 @@

This package contains a modified version of ca-bundle.crt:

ca-bundle.crt -- Bundle of CA Root Certificates

This is a bundle of X.509 certificates of public Certificate Authorities
(CA). These were automatically extracted from Mozilla's root certificates
file (certdata.txt). This file can be found in the mozilla source tree:
https://hg.mozilla.org/mozilla-central/file/tip/security/nss/lib/ckfw/builtins/certdata.txt
It contains the certificates in PEM format and therefore
can be directly used with curl / libcurl / php_curl, or with
an Apache+mod_ssl webserver for SSL client authentication.
Just configure this file as the SSLCACertificateFile.#

***** BEGIN LICENSE BLOCK *****
This Source Code Form is subject to the terms of the Mozilla Public License,
v. 2.0. If a copy of the MPL was not distributed with this file, You can obtain
one at http://mozilla.org/MPL/2.0/.

***** END LICENSE BLOCK *****
@(#) $RCSfile: certdata.txt,v $ $Revision: 1.80 $ $Date: 2011/11/03 15:11:58 $

1

aws-lambda/src/certifi-2026.2.25.dist-info/top_level.txt

Normal file

@@ -0,0 +1 @@

certifi

4

aws-lambda/src/certifi/__init__.py

Normal file

@@ -0,0 +1,4 @@

from .core import contents, where

__all__ = ["contents", "where"]
__version__ = "2026.02.25"

12

aws-lambda/src/certifi/__main__.py

Normal file

@@ -0,0 +1,12 @@

import argparse

from certifi import contents, where

parser = argparse.ArgumentParser()
parser.add_argument("-c", "--contents", action="store_true")
args = parser.parse_args()

if args.contents:
    print(contents())
else:
    print(where())

BIN

aws-lambda/src/certifi/__pycache__/__init__.cpython-313.pyc

Normal file

Binary file not shown.

BIN

aws-lambda/src/certifi/__pycache__/__main__.cpython-313.pyc

Normal file

Binary file not shown.

BIN

aws-lambda/src/certifi/__pycache__/core.cpython-313.pyc

Normal file

Binary file not shown.

4494

aws-lambda/src/certifi/cacert.pem

Normal file

File diff suppressed because it is too large


83

aws-lambda/src/certifi/core.py

Normal file

@@ -0,0 +1,83 @@

"""
certifi.py
~~~~~~~~~~

This module returns the installation location of cacert.pem or its contents.
"""
import sys
import atexit

def exit_cacert_ctx() -> None:
    _CACERT_CTX.__exit__(None, None, None)  # type: ignore[union-attr]


if sys.version_info >= (3, 11):

    from importlib.resources import as_file, files

    _CACERT_CTX = None
    _CACERT_PATH = None

    def where() -> str:
        # This is slightly terrible, but we want to delay extracting the file
        # in cases where we're inside of a zipimport situation until someone
        # actually calls where(), but we don't want to re-extract the file
        # on every call of where(), so we'll do it once then store it in a
        # global variable.
        global _CACERT_CTX
        global _CACERT_PATH
        if _CACERT_PATH is None:
            # This is slightly janky, the importlib.resources API wants you to
            # manage the cleanup of this file, so it doesn't actually return a
            # path, it returns a context manager that will give you the path
            # when you enter it and will do any cleanup when you leave it. In
            # the common case of not needing a temporary file, it will just
            # return the file system location and the __exit__() is a no-op.
            #
            # We also have to hold onto the actual context manager, because
            # it will do the cleanup whenever it gets garbage collected, so
            # we will also store that at the global level as well.
            _CACERT_CTX = as_file(files("certifi").joinpath("cacert.pem"))
            _CACERT_PATH = str(_CACERT_CTX.__enter__())
            atexit.register(exit_cacert_ctx)

        return _CACERT_PATH

    def contents() -> str:
        return files("certifi").joinpath("cacert.pem").read_text(encoding="ascii")

else:

    from importlib.resources import path as get_path, read_text

    _CACERT_CTX = None
    _CACERT_PATH = None

    def where() -> str:
        # This is slightly terrible, but we want to delay extracting the
        # file in cases where we're inside of a zipimport situation until
        # someone actually calls where(), but we don't want to re-extract
        # the file on every call of where(), so we'll do it once then store
        # it in a global variable.
        global _CACERT_CTX
        global _CACERT_PATH
        if _CACERT_PATH is None:
            # This is slightly janky, the importlib.resources API wants you
            # to manage the cleanup of this file, so it doesn't actually
            # return a path, it returns a context manager that will give
            # you the path when you enter it and will do any cleanup when
            # you leave it. In the common case of not needing a temporary
            # file, it will just return the file system location and the
            # __exit__() is a no-op.
            #
            # We also have to hold onto the actual context manager, because
            # it will do the cleanup whenever it gets garbage collected, so
            # we will also store that at the global level as well.
            _CACERT_CTX = get_path("certifi", "cacert.pem")
            _CACERT_PATH = str(_CACERT_CTX.__enter__())
            atexit.register(exit_cacert_ctx)

        return _CACERT_PATH

    def contents() -> str:
        return read_text("certifi", "cacert.pem", encoding="ascii")

0

aws-lambda/src/certifi/py.typed

Normal file

@@ -0,0 +1 @@

pip

798

aws-lambda/src/charset_normalizer-3.4.6.dist-info/METADATA

Normal file

@@ -0,0 +1,798 @@

Metadata-Version: 2.4
Name: charset-normalizer
Version: 3.4.6
Summary: The Real First Universal Charset Detector. Open, modern and actively maintained alternative to Chardet.
Author-email: "Ahmed R. TAHRI" <tahri.ahmed@proton.me>
Maintainer-email: "Ahmed R. TAHRI" <tahri.ahmed@proton.me>
License: MIT
Project-URL: Changelog, https://github.com/jawah/charset_normalizer/blob/master/CHANGELOG.md
Project-URL: Documentation, https://charset-normalizer.readthedocs.io/
Project-URL: Code, https://github.com/jawah/charset_normalizer
Project-URL: Issue tracker, https://github.com/jawah/charset_normalizer/issues
Keywords: encoding,charset,charset-detector,detector,normalization,unicode,chardet,detect
Classifier: Development Status :: 5 - Production/Stable
Classifier: Intended Audience :: Developers
Classifier: Operating System :: OS Independent
Classifier: Programming Language :: Python
Classifier: Programming Language :: Python :: 3
Classifier: Programming Language :: Python :: 3.7
Classifier: Programming Language :: Python :: 3.8
Classifier: Programming Language :: Python :: 3.9
Classifier: Programming Language :: Python :: 3.10
Classifier: Programming Language :: Python :: 3.11
Classifier: Programming Language :: Python :: 3.12
Classifier: Programming Language :: Python :: 3.13
Classifier: Programming Language :: Python :: 3.14
Classifier: Programming Language :: Python :: 3 :: Only
Classifier: Programming Language :: Python :: Implementation :: CPython
Classifier: Programming Language :: Python :: Implementation :: PyPy
Classifier: Topic :: Text Processing :: Linguistic
Classifier: Topic :: Utilities
Classifier: Typing :: Typed
Requires-Python: >=3.7
Description-Content-Type: text/markdown
License-File: LICENSE
Provides-Extra: unicode-backport
Dynamic: license-file

<h1 align="center">Charset Detection, for Everyone 👋</h1>

<p align="center">
  <sup>The Real First Universal Charset Detector</sup><br>
  <a href="https://pypi.org/project/charset-normalizer">
    <img src="https://img.shields.io/pypi/pyversions/charset_normalizer.svg?orange=blue" />
  </a>
  <a href="https://pepy.tech/project/charset-normalizer/">
    <img alt="Download Count Total" src="https://static.pepy.tech/badge/charset-normalizer/month" />
  </a>
  <a href="https://bestpractices.coreinfrastructure.org/projects/7297">
    <img src="https://bestpractices.coreinfrastructure.org/projects/7297/badge">
  </a>
</p>
<p align="center">
  <sup><i>Featured Packages</i></sup><br>
  <a href="https://github.com/jawah/niquests">
    <img alt="Static Badge" src="https://img.shields.io/badge/Niquests-Most_Advanced_HTTP_Client-cyan">
  </a>
  <a href="https://github.com/jawah/wassima">
    <img alt="Static Badge" src="https://img.shields.io/badge/Wassima-Certifi_Replacement-cyan">
  </a>
</p>
<p align="center">
  <sup><i>In other language (unofficial port - by the community)</i></sup><br>
  <a href="https://github.com/nickspring/charset-normalizer-rs">
    <img alt="Static Badge" src="https://img.shields.io/badge/Rust-red">
  </a>
</p>

> A library that helps you read text from an unknown charset encoding.<br /> Motivated by `chardet`,
> I'm trying to resolve the issue by taking a new approach.
> All IANA character set names for which the Python core library provides codecs are supported.
> You can also register your own set of codecs, and yes, it would work as-is.

<p align="center">
  >>>>> <a href="https://charsetnormalizerweb.ousret.now.sh" target="_blank">👉 Try Me Online Now, Then Adopt Me 👈 </a> <<<<<
</p>

This project offers you an alternative to **Universal Charset Encoding Detector**, also known as **Chardet**.

| Feature | [Chardet](https://github.com/chardet/chardet) | Charset Normalizer | [cChardet](https://github.com/PyYoshi/cChardet) |
|--------------------------------------------------|:---------------------------------------------:|:-----------------------------------------------------------------------------------------------:|:-----------------------------------------------:|
| `Fast` | ✅ | ✅ | ✅ |
| `Universal`[^1] | ❌ | ✅ | ❌ |
| `Reliable` **without** distinguishable standards | ✅ | ✅ | ✅ |
| `Reliable` **with** distinguishable standards | ✅ | ✅ | ✅ |
| `License` | _Disputed_[^2]<br>_restrictive_ | MIT | MPL-1.1<br>_restrictive_ |
| `Native Python` | ✅ | ✅ | ❌ |
| `Detect spoken language` | ✅ | ✅ | N/A |
| `UnicodeDecodeError Safety` | ✅ | ✅ | ❌ |
| `Whl Size (min)` | 500 kB | 150 kB | ~200 kB |
| `Supported Encoding` | 99 | [99](https://charset-normalizer.readthedocs.io/en/latest/user/support.html#supported-encodings) | 40 |
| `Can register custom encoding` | ❌ | ✅ | ❌ |

<p align="center">
  <img src="https://i.imgflip.com/373iay.gif" alt="Reading Normalized Text" width="226"/><img src="https://media.tenor.com/images/c0180f70732a18b4965448d33adba3d0/tenor.gif" alt="Cat Reading Text" width="200"/>
</p>

[^1]: They are clearly using specific code for a specific encoding even if covering most of used one.

[^2]: Chardet 7.0+ was relicensed from LGPL-2.1 to MIT following an AI-assisted rewrite. This relicensing is disputed on two independent grounds: **(a)** the original author [contests](https://github.com/chardet/chardet/issues/327) that the maintainer had the right to relicense, arguing the rewrite is a derivative work of the LGPL-licensed codebase since it was not a clean room implementation; **(b)** the copyright claim itself is [questionable](https://github.com/chardet/chardet/issues/334) given the code was primarily generated by an LLM, and AI-generated output may not be copyrightable under most jurisdictions. Either issue alone could undermine the MIT license. Beyond licensing, the rewrite raises questions about responsible use of AI in open source: key architectural ideas pioneered by charset-normalizer - notably decode-first validity filtering (our foundational approach since v1) and encoding pairwise similarity with the same algorithm and threshold — surfaced in chardet 7 without acknowledgment. The project also imported test files from charset-normalizer to train and benchmark against it, then claimed superior accuracy on those very files. Charset-normalizer has always been MIT-licensed, encoding-agnostic by design, and built on a verifiable human-authored history.

## ⚡ Performance

This package offer better performances (99th, and 95th) against Chardet. Here are some numbers.

| Package | Accuracy | Mean per file (ms) | File per sec (est) |
|---------------------------------------------------|:--------:|:------------------:|:------------------:|
| [chardet 7.1](https://github.com/chardet/chardet) | 89 % | 3 ms | 333 file/sec |
| charset-normalizer | **97 %** | 3 ms | 333 file/sec |

| Package | 99th percentile | 95th percentile | 50th percentile |
|---------------------------------------------------|:---------------:|:---------------:|:---------------:|
| [chardet 7.1](https://github.com/chardet/chardet) | 32 ms | 17 ms | < 1 ms |
| charset-normalizer | 16 ms | 10 ms | 1 ms |

_updated as of March 2026 using CPython 3.12, Charset-Normalizer 3.4.6, and Chardet 7.1.0_

~Chardet's performance on larger file (1MB+) are very poor. Expect huge difference on large payload.~ No longer the case since Chardet 7.0+

> Stats are generated using 400+ files using default parameters. More details on used files, see GHA workflows.
> And yes, these results might change at any time. The dataset can be updated to include more files.
> The actual delays heavily depends on your CPU capabilities. The factors should remain the same.
> Chardet claims on his documentation to have a greater accuracy than us based on the dataset they trained Chardet on(...)
> Well, it's normal, the opposite would have been worrying. Whereas charset-normalizer don't train on anything, our solution
> is based on a completely different algorithm, still heuristic through, it does not need weights across every encoding tables.

## ✨ Installation

Using pip:

```sh
pip install charset-normalizer -U
```

## 🚀 Basic Usage

### CLI
This package comes with a CLI.

```
usage: normalizer [-h] [-v] [-a] [-n] [-m] [-r] [-f] [-t THRESHOLD]
                  file [file ...]

The Real First Universal Charset Detector. Discover originating encoding used
on text file. Normalize text to unicode.

positional arguments:
  files                 File(s) to be analysed

optional arguments:
  -h, --help            show this help message and exit
  -v, --verbose         Display complementary information about file if any.
                        Stdout will contain logs about the detection process.
  -a, --with-alternative
                        Output complementary possibilities if any. Top-level
                        JSON WILL be a list.
  -n, --normalize       Permit to normalize input file. If not set, program
                        does not write anything.
  -m, --minimal         Only output the charset detected to STDOUT. Disabling
                        JSON output.
  -r, --replace         Replace file when trying to normalize it instead of
                        creating a new one.
  -f, --force           Replace file without asking if you are sure, use this
                        flag with caution.
  -t THRESHOLD, --threshold THRESHOLD
                        Define a custom maximum amount of chaos allowed in
                        decoded content. 0. <= chaos <= 1.
  --version             Show version information and exit.
```

```bash
normalizer ./data/sample.1.fr.srt
```

or

```bash
python -m charset_normalizer ./data/sample.1.fr.srt
```

🎉 Since version 1.4.0 the CLI produce easily usable stdout result in JSON format.

```json
{
    "path": "/home/default/projects/charset_normalizer/data/sample.1.fr.srt",
    "encoding": "cp1252",
    "encoding_aliases": [
        "1252",
        "windows_1252"
    ],
    "alternative_encodings": [
        "cp1254",
        "cp1256",
        "cp1258",
        "iso8859_14",
        "iso8859_15",
        "iso8859_16",
        "iso8859_3",
        "iso8859_9",
        "latin_1",
        "mbcs"
    ],
    "language": "French",
    "alphabets": [
        "Basic Latin",
        "Latin-1 Supplement"
    ],
    "has_sig_or_bom": false,
    "chaos": 0.149,
    "coherence": 97.152,
    "unicode_path": null,
    "is_preferred": true
}
```

### Python
*Just print out normalized text*
```python
from charset_normalizer import from_path

results = from_path('./my_subtitle.srt')

print(str(results.best()))
```

*Upgrade your code without effort*
```python
from charset_normalizer import detect
```

The above code will behave the same as **chardet**. We ensure that we offer the best (reasonable) BC result possible.

See the docs for advanced usage : [readthedocs.io](https://charset-normalizer.readthedocs.io/en/latest/)

## 😇 Why

When I started using Chardet, I noticed that it was not suited to my expectations, and I wanted to propose a
reliable alternative using a completely different method. Also! I never back down on a good challenge!

I **don't care** about the **originating charset** encoding, because **two different tables** can
produce **two identical rendered string.**
What I want is to get readable text, the best I can.

In a way, **I'm brute forcing text decoding.** How cool is that ? 😎

Don't confuse package **ftfy** with charset-normalizer or chardet. ftfy goal is to repair Unicode string whereas charset-normalizer to convert raw file in unknown encoding to unicode.

## 🍰 How

- Discard all charset encoding table that could not fit the binary content.
- Measure noise, or the mess once opened (by chunks) with a corresponding charset encoding.
- Extract matches with the lowest mess detected.
- Additionally, we measure coherence / probe for a language.

**Wait a minute**, what is noise/mess and coherence according to **YOU ?**

*Noise :* I opened hundred of text files, **written by humans**, with the wrong encoding table. **I observed**, then
**I established** some ground rules about **what is obvious** when **it seems like** a mess (aka. defining noise in rendered text).
I know that my interpretation of what is noise is probably incomplete, feel free to contribute in order to
improve or rewrite it.

*Coherence :* For each language there is on earth, we have computed ranked letter appearance occurrences (the best we can). So I thought
that intel is worth something here. So I use those records against decoded text to check if I can detect intelligent design.

## ⚡ Known limitations

- Language detection is unreliable when text contains two or more languages sharing identical letters. (eg. HTML (english tags) + Turkish content (Sharing Latin characters))
- Every charset detector heavily depends on sufficient content. In common cases, do not bother run detection on very tiny content.

## ⚠️ About Python EOLs

**If you are running:**

- Python >=2.7,<3.5: Unsupported
- Python 3.5: charset-normalizer < 2.1
- Python 3.6: charset-normalizer < 3.1

Upgrade your Python interpreter as soon as possible.

## 👤 Contributing

Contributions, issues and feature requests are very much welcome.<br />
Feel free to check [issues page](https://github.com/ousret/charset_normalizer/issues) if you want to contribute.